A Practical Guide for GRC Teams

Image by AI

In the modern enterprise, the Governance, Risk, and Compliance (GRC) function is undergoing a radical evolution. For decades, GRC has been characterized by spreadsheets, annual or periodic audits, and a “check-the-box” mentality. However, the sheer velocity of data and the complexity of global regulations have made these traditional methods obsolete.

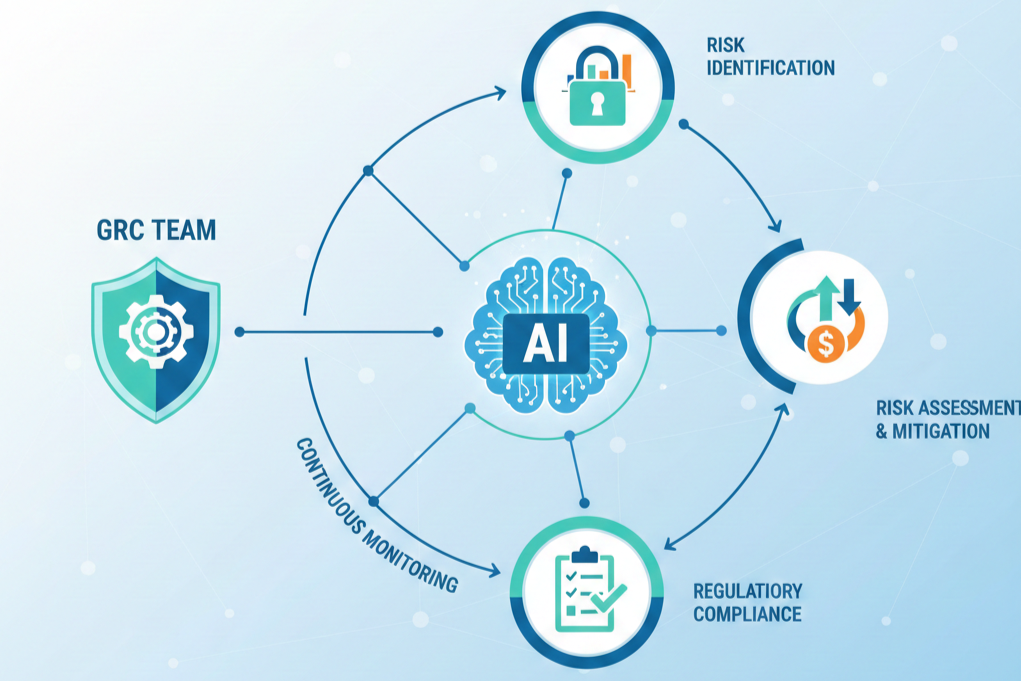

Today, forward-looking GRC teams are turning to AI to manage the overwhelming scale of modern business risk. By integrating Machine Learning (ML), Natural Language Processing (NLP), and Predictive Analytics into their workflows, organizations can move toward a “Continuous Compliance” model that identifies threats before they materialize.

The Current State of GRC and the Need for Transformation

Before we explore the technical implementation, we must acknowledge why the status quo is changing. Most GRC teams currently operate in a state of “Information Overload.” Spreadsheets no longer give a usable overview of the relevant data, and even diagrams are becoming a chore to read.

The Regulatory Explosion

In the past 2–3 years, we have seen a record-breaking number of regulatory updates across the globe on AI and the implications of its adoption, from the EU AI Act to evolving ESG (Environmental, Social, and Governance) reporting standards to U.S. Executive Order 14179.

Executive Order 14179

Executive Order 14179, issued in January 2025, reorients U.S. AI policy by revoking the 2023 Executive Order 14110 on “Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence.” Its core objective is to eliminate federal policies perceived as impediments to innovation and U.S. dominance in AI.

The order prioritizes national security by directing agencies to enhance U.S. dominance in AI technologies. While it doesn’t establish new cybersecurity requirements, it mandates that the federal government identify and remove existing policies that could obstruct the secure development of AI systems critical to national interests.

The order does not create new privacy standards but implicitly affects data governance by revoking previous directives, including those that emphasized data transparency and protection. This rollback may influence how federal agencies and private sector actors interpret and implement privacy safeguards in AI deployments.

A human compliance officer cannot possibly read, interpret, and map every single change to internal controls across a global enterprise.

USA: AI Bill of Rights

The Blueprint for an AI Bill of Rights, released by the White House Office of Science and Technology Policy in October 2022, outlines a set of five principles intended to guide the design, use, and deployment of AI systems in the United States. While non-binding, the blueprint provides a foundational framework for federal agencies, private companies, and developers to promote accountable AI practices.

The blueprint advocates for AI systems to be safe and effective, requiring pre-deployment testing, risk identification, and ongoing monitoring to ensure they operate as intended and do not cause harm.

It emphasizes that users should have control over how their data is collected and used, with built-in protections against intrusive surveillance and misuse.

The five principles are:

Safe and effective systems: AI systems should be subject to pre-deployment testing, risk identification, and ongoing monitoring to ensure they operate as intended and do not cause harm.

Algorithmic discrimination protections: People should not face discrimination by algorithms, and systems should be designed and used in an equitable way.

Data privacy: Users should have control over how their data is collected and used, with built-in protections against intrusive surveillance and misuse.

Notice and explanation: People should be informed when an AI system is being used and provided with clear explanations about how it functions and affects them.

Human alternatives, consideration, and fallback: Individuals should be able to opt out of AI-driven processes and access human decision-making when needed, especially in high-stakes contexts.

Though not enforceable by law, the blueprint has influenced federal procurement guidelines, agency risk assessments, and sector-specific AI governance initiatives. It reflects the U.S. government’s broader strategy of promoting trustworthy AI through voluntary standards, secure design, and public engagement.

Canada: Artificial Intelligence and Data Act (AIDA)

AIDA, proposed as part of Bill C-27, focuses on high-impact AI systems, requiring risk assessments, transparency, human oversight, and robustness to ensure safety and accountability.

The proposed act aims to protect individuals by aligning with existing Canadian legal frameworks like privacy, consumer protection, and human rights legislation, ensuring responsible development and use of AI technologies.

China: Generative AI Regulation

China’s regulatory framework for generative artificial intelligence is anchored in the Interim Measures for the Management of Generative Artificial Intelligence Services (“AI Measures”), which took effect on August 15, 2023. These measures mark China’s first administrative regulation directly targeting generative AI and are enforced by a coalition of state agencies led by the Cyberspace Administration of China.

The AI Measures apply to all organizations providing generative AI services to the public within China, regardless of their country of incorporation. While sector-agnostic in scope, the rules interact with other AI-relevant laws such as the Cybersecurity Law, Personal Information Protection Law (PIPL), and various sector-specific guidelines in finance, healthcare, and automotive industries.

The AI Measures state that providers must maintain the security and reliability of their systems and prevent the generation of illegal or harmful content.

The measures mandate lawful data use, requiring providers to obtain user consent, respect intellectual property, and ensure the legal sourcing of training data.

OECD AI Principles

The OECD’s Recommendation on Artificial Intelligence — adopted in 2019 and updated in 2023 and 2024 — is the first intergovernmental standard promoting trustworthy AI. It establishes five principles for responsible AI development and five recommendations for national and international action. These principles aim to ensure AI systems are human-centric, trustworthy, and aligned with democratic values and human rights.

Principles for Trustworthy AI

Inclusive growth, sustainable development, and well-being: AI should benefit people and the planet by improving human capabilities, promoting inclusion, reducing inequality, and supporting environmental sustainability.

Respect for the rule of law, human rights, and democratic values: AI actors must uphold freedoms, dignity, equality, and rights throughout the AI lifecycle. Mechanisms should protect against misuse and ensure human oversight.

Transparency and explainability: Stakeholders should understand how AI systems operate. Providers must disclose information about data sources, logic, and decision-making processes in a clear and context-appropriate way, enabling users to challenge outputs where needed.

Robustness, security, and safety: AI systems should function reliably in various conditions and be designed to prevent and mitigate harm. Systems must be overridable or decommissioned when needed and support information integrity.

Accountability: AI actors are responsible for ensuring systems function correctly and in line with these principles. They must enable traceability of data and decisions, apply risk management at all lifecycle stages, and collaborate with other stakeholders to address issues like bias and rights violations.

The Data Silo Problem

Risk data is often scattered across HR systems, financial databases, IT logs, and third-party portals. Risk management as commonly practiced relies on people manually gathering this data, which leads to risk assessments that are out of date by the time they are reviewed. Hence the need for continuous, automated risk management.

The Objective Reality of AI

Unlike humans, AI does not suffer from “fatigue.” It can analyze millions of data points with consistent objectivity, identifying patterns of anomaly, non-compliance, and non-conformity that would be invisible to even the most seasoned auditor.

AI Technologies for GRC Teams

To implement AI effectively, GRC teams must understand the tools at their disposal. We are moving beyond simple automation into the realm of cognitive computing.

Natural Language Processing (NLP)

NLP is the “translator” between human language and machine action. For GRC, this means the ability to ingest large legal documents and extract specific obligations in a short time.

NLP identifies the “who, what, and when” of a regulation, allowing for automated policy updates.
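To make the “who, what, and when” idea concrete, here is a minimal sketch. It uses a regular expression as a rule-based stand-in for a real NLP model, and the pattern, the function name `extract_obligations`, and the sample sentences are all assumptions made up for illustration (the sample text is not quoted from any regulation).

```python
import re

# Rule-based stand-in for an NLP model: "<who> shall/must <what> within <when>".
OBLIGATION = re.compile(
    r"(?P<who>The [a-z ]+?) (?:shall|must) (?P<what>.+?) "
    r"within (?P<when>\d+ (?:hours|days))"
)


def extract_obligations(text):
    """Return one {'who', 'what', 'when'} dict per obligation sentence found."""
    return [match.groupdict() for match in OBLIGATION.finditer(text)]


# Invented sample text, not a quotation from any regulation.
sample = (
    "The controller shall notify the supervisory authority within 72 hours. "
    "The processor must delete personal data within 30 days."
)
obligations = extract_obligations(sample)
for item in obligations:
    print(item["who"], "|", item["what"], "|", item["when"])
```

A production system would likely replace the regular expression with a trained language model, but the output shape (actor, action, deadline) is what lets a downstream tool map obligations to policies.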

Machine Learning (ML) and Anomaly Detection

ML models thrive on historical data. Fed three years of “clean” financial and operational data, an ML model learns the baseline of “normal” behavior.

When a transaction occurs that deviates from this baseline, even slightly, the system flags it for review. This is the heart of modern risk management.
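The baseline-and-deviation idea can be sketched with nothing more than a mean and a standard deviation. This is a deliberately simple z-score check standing in for a trained ML model; the function names, the threshold of 3.0, and the sample amounts are assumptions chosen for illustration.

```python
from statistics import mean, stdev


def fit_baseline(history):
    """Learn a simple baseline (mean, sample standard deviation) from clean history."""
    return mean(history), stdev(history)


def is_anomalous(amount, baseline, threshold=3.0):
    """Flag an amount whose distance from the mean exceeds `threshold` deviations."""
    mu, sigma = baseline
    if sigma == 0:
        return amount != mu
    return abs(amount - mu) / sigma > threshold


# Hypothetical "clean" historical transaction amounts.
history = [1020.0, 980.0, 1005.0, 995.0, 1010.0, 990.0, 1000.0, 1015.0]
baseline = fit_baseline(history)

# One ordinary transaction and one far outside the learned baseline.
flags = {amount: is_anomalous(amount, baseline) for amount in (1008.0, 4500.0)}
print(flags)
```

The threshold is the tuning knob: lowering it catches subtler deviations at the cost of more alerts for a human to review.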

Generative AI (GenAI)

The newest frontier for GRC teams is GenAI. While it must be used cautiously, it is exceptionally good at drafting policy language, summarizing audit reports, and creating “Plain English” explanations of complex compliance requirements for non-expert employees.

Practical AI Use Cases for GRC Teams

The integration of AI into risk management isn’t about replacing human judgment; it is about providing humans with higher-quality data and more time for strategic decision-making. Here are seven practical use cases for modern GRC teams.

Automated Regulatory Change Management

The Challenge: Global organizations operate across a fragmented landscape of local, federal, and international laws. Manually tracking updates from bodies like the SEC, the European Commission, or the Monetary Authority of Singapore (MAS) involves thousands of hours of legal review. By the time a human identifies a change, the organization may already be in a period of non-compliance.

The AI Solution: Using Natural Language Processing (NLP), regulatory technology (“RegTech”) platforms ingest real-time feeds from government portals and legal databases. The AI doesn’t just “read” the text; it classifies it by “intent,” identifying whether a change is an amendment to an existing obligation or a brand-new mandate.

The “Gap Analysis” Mechanic: The AI automatically compares the new regulatory text against your existing internal control framework. If a new data privacy law requires a 72-hour breach notification and your current policy says 96 hours, the AI flags this specific discrepancy.
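Once obligations have been extracted into structured values, the comparison itself is simple. The sketch below assumes both the regulation and the internal policy have already been reduced to "maximum hours allowed" per control; the `Gap` class, the `find_gaps` function, and the second control name are hypothetical, while the 72 versus 96 hour figures come from the example above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Gap:
    control: str
    required_hours: int
    current_hours: Optional[int]  # None means the policy has no such control


def find_gaps(requirements, policy):
    """Return controls where the policy is missing or looser than the regulation.

    Values are maximum allowed hours, so a larger policy value is a looser control.
    """
    gaps = []
    for control, required in requirements.items():
        current = policy.get(control)
        if current is None or current > required:
            gaps.append(Gap(control, required, current))
    return gaps


# Hypothetical structured view of a new regulation and the current policy.
regulation = {"breach_notification_hours": 72, "access_request_response_hours": 720}
internal_policy = {"breach_notification_hours": 96, "access_request_response_hours": 480}

gaps = find_gaps(regulation, internal_policy)
for gap in gaps:
    print(f"{gap.control}: policy allows {gap.current_hours}h, regulation requires {gap.required_hours}h")
```

Only the breach notification control is flagged here, because the policy's 96 hours exceeds the 72 hours required.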

Technical Benefit: This shifts the compliance lifecycle from a batch process (monthly reviews) to a stream process (real-time updates), reducing the time between a legal change and a control update from months to minutes.

Dynamic Third-Party Risk Management (TPRM)

The Challenge: Traditional TPRM relies on “point-in-time” assessments. A vendor might look secure during an annual audit in March, but suffer a major breach a month later. GRC teams are often the last to know, leaving the supply chain vulnerable.

The AI Solution: AI enables Continuous Monitoring by aggregating unstructured data from across the web.

Cyber Intelligence: Scans the dark web and threat intelligence feeds for leaked employee credentials or database dumps belonging to your vendors.

Sentiment & Financial Analysis: Uses NLP to analyze news reports and financial filings for “signals of distress,” such as sudden layoffs or litigation.

Risk Impact: This provides a “Risk Velocity” metric. If a critical cloud provider has a security incident, your GRC team is alerted within minutes via an automated trigger, allowing you to move data or switch to failover systems before the impact becomes catastrophic.

Predictive Operational Risk Modeling

The Challenge: Most risk management is lagging; it tells you what went wrong yesterday. Static risk maps are often based on subjective judgments from decision makers.

The AI Solution: Predictive Analytics and Monte Carlo Simulations transform risk into a mathematical probability. By feeding the AI historical data, such as system downtime, employee turnover rates, economic indicators, and even weather patterns, the model runs thousands of “what-if” scenarios.

The Mechanic: The AI identifies correlations that humans might miss, such as a link between high employee burnout in a specific region and an increase in data entry errors that lead to financial risk.

Strategic Value: This allows leadership to practice “Proactive Capital Allocation.” Instead of setting aside funds for a disaster, you invest in the specific controls (like hiring or system upgrades) that the AI predicts will most effectively lower the “Value at Risk” (VaR).
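A Monte Carlo run of “what-if” scenarios can be sketched in a few lines. Everything numeric below is a hypothetical assumption for illustration: the three risk events, their annual probabilities, the loss ranges, and the choice of a uniform loss distribution. The VaR here is the simple empirical percentile of the simulated losses.

```python
import random


def simulate_annual_loss(rng, events):
    """One 'what-if' year: each event may occur and, if it does, draws a loss."""
    total = 0.0
    for probability, low, high in events:
        if rng.random() < probability:
            total += rng.uniform(low, high)
    return total


def value_at_risk(losses, confidence=0.95):
    """Empirical VaR: the loss not exceeded in `confidence` of simulated years."""
    ordered = sorted(losses)
    index = min(int(confidence * len(ordered)), len(ordered) - 1)
    return ordered[index]


# Hypothetical risk register: (annual probability, minimum loss, maximum loss).
events = [
    (0.30, 10_000, 50_000),      # system downtime
    (0.10, 50_000, 250_000),     # data entry error with financial impact
    (0.02, 500_000, 2_000_000),  # major breach
]

rng = random.Random(42)  # fixed seed so the run is reproducible
losses = [simulate_annual_loss(rng, events) for _ in range(10_000)]

expected_loss = sum(losses) / len(losses)
var_95 = value_at_risk(losses, 0.95)
print(f"Expected annual loss: {expected_loss:,.0f}")
print(f"95% VaR: {var_95:,.0f}")
```

To compare controls, rerun the simulation with the probability or loss range that a control would change (for example, a lower downtime probability after a system upgrade) and compare the resulting VaR figures.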

Audit Coverage (The End of Sampling)

The Challenge: Due to time constraints, internal auditors use statistical sampling, checking perhaps 100 out of 1,000 invoices. This leaves a blind spot where non-conformity or errors can hide.

The AI Solution: AI-enabled audit tools utilize Machine Learning (ML) Anomaly Detection to process the entire population of data.

Transaction Analysis: The AI scans 100% of general ledger entries, T&E (Travel and Expense) reports, and system access logs.

Pattern Recognition: It looks for “Benford’s Law” deviations or unusual “time-of-day” entries (e.g., an accountant approving a massive wire transfer at 3:00 AM on a Sunday).
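Benford’s Law says that in many naturally occurring sets of numbers the leading digit d appears with probability log10(1 + 1/d), so 1 leads about 30% of the time and 9 under 5%. A minimal sketch of a first-digit check follows; the function names and both sample data sets are assumptions for illustration, and the score used is a plain mean absolute deviation rather than any particular audit tool’s method.

```python
import math
from collections import Counter


def first_digit(amount):
    """Leading non-zero digit of a non-zero amount, read from scientific notation."""
    return int(f"{abs(amount):.15e}"[0])


def benford_expected(digit):
    """Benford's Law probability that `digit` (1-9) is the leading digit."""
    return math.log10(1 + 1 / digit)


def benford_mad(amounts):
    """Mean absolute deviation between observed and expected first-digit shares."""
    counts = Counter(first_digit(a) for a in amounts if a != 0)
    total = sum(counts.values())
    return sum(
        abs(counts.get(d, 0) / total - benford_expected(d)) for d in range(1, 10)
    ) / 9


# Powers of two are a classic example of data that follows Benford's Law closely.
natural = [2**k for k in range(1, 101)]
# Hypothetical invoices clustered just under a 10,000 approval limit.
suspicious = [9000 + i for i in range(100)]

natural_score = benford_mad(natural)
suspicious_score = benford_mad(suspicious)
print(f"natural: {natural_score:.4f}  suspicious: {suspicious_score:.4f}")
```

The clustered invoices score far higher than the natural data. A high score is a prompt for human review, not proof of wrongdoing, since some legitimate data sets do not follow Benford’s Law.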

The Result: “Total Visibility.” The audit shifts from a “detective” control to a “deterrent” control. When employees know every transaction is being screened by AI, the rate of “accidental” non-compliance drops significantly.

AI-Powered Internal Policy Management

The Challenge: Most corporate policies are buried in 100-page PDFs on a dusty intranet. When employees find them too difficult to navigate, they make guesses, leading to “shadow compliance” or accidental violations.

The AI Solution: Implement a Generative AI (GenAI) interface, often called a “Compliance Bot,” trained exclusively on your internal policy documents.

Semantic Search: An employee can ask, “Can I accept a $200 dinner from a vendor in Singapore?” The AI cross-references the Global Anti-Bribery Policy with the regional Singapore annex and provides an instant answer.

Culture Impact: This democratizes risk management. It moves compliance out of the legal department and into the hands of the frontline staff, fostering a culture of “Compliance by Design.”

Automated Evidence Collection for SOC2, HIPAA, and ISO 27001

The Challenge: The “Evidence Scramble” is the most hated part of GRC. It involves dozens of people spending weeks taking screenshots of AWS configurations, Jira tickets, and HR onboarding logs to prove to auditors that controls are working.

The AI Solution: API-driven AI agents create a persistent “Evidence Data Lake.”

Automated Verification: Instead of a human checking if “Multi-Factor Authentication is enabled for all users” once a year, the AI agent checks the configuration via API every hour.

Timestamping & Storage: The AI automatically captures the proof, timestamps it, and maps it to the relevant SOC2 or ISO control.
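The check-timestamp-map cycle can be sketched as follows. This is not any vendor’s API: the user list stands in for whatever an identity provider’s API would return, the control labels are placeholders rather than real SOC 2 or ISO 27001 control numbers, and the SHA-256 digest is one assumed way to make stored evidence tamper-evident.

```python
import hashlib
import json
from datetime import datetime, timezone


def check_mfa(users):
    """Control check: every user record must have MFA enabled."""
    missing = [u["name"] for u in users if not u.get("mfa_enabled", False)]
    return {"passed": not missing, "users_without_mfa": missing}


def record_evidence(control_ids, result, collected_at=None):
    """Wrap a check result with a UTC timestamp, control mapping, and content hash."""
    collected_at = collected_at or datetime.now(timezone.utc)
    record = {
        "controls": control_ids,
        "result": result,
        "collected_at": collected_at.isoformat(),
    }
    canonical = json.dumps(record, sort_keys=True).encode("utf-8")
    record["sha256"] = hashlib.sha256(canonical).hexdigest()
    return record


# Hypothetical response from an identity provider's user-listing API.
users = [
    {"name": "alice", "mfa_enabled": True},
    {"name": "bob", "mfa_enabled": False},
]

# Placeholder control labels; substitute your framework's real control IDs.
evidence = record_evidence(
    ["SOC2:logical-access", "ISO27001:authentication"], check_mfa(users)
)
print(json.dumps(evidence, indent=2))
```

Run on a schedule, each record becomes one entry in the evidence store, and a failed check (as with `bob` here) can raise an alert immediately instead of surfacing at audit time.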

Efficiency Gain: This transforms the audit from a “fire drill” into a “non-event.” When the external auditor arrives, the GRC team simply provides access to the pre-populated evidence vault, reducing audit prep time by up to 80%.

Fraud Detection and AML (Anti-Money Laundering)

The Challenge: Money laundering often involves “layering,” which means moving money through a complex web of accounts to hide its origin. Traditional rule-based systems (e.g., “flag any transfer over $10k”) are easily circumvented by “smurfing,” where a large sum is split into smaller, frequent transfers.

The AI Solution: Graph Neural Networks (GNNs). Unlike rule-based checks that evaluate each record in isolation, GNNs focus on the relationships between data points.

The Mechanic: The AI maps the entire network of transactions. It can detect a “Cycle” (Money moving from Person A to B to C and back to A) or a “Hub and Spoke” pattern that is a hallmark of criminal activity.
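The “Cycle” pattern can be illustrated without a neural network at all. The sketch below is a plain depth-first search over a transaction graph, not a GNN; it only shows what kind of structure such a model is meant to surface. The account names, amounts, and the `find_cycles` function are assumptions for illustration.

```python
from collections import defaultdict


def find_cycles(transfers, max_length=5):
    """Find simple directed cycles of at most `max_length` accounts via DFS.

    Each cycle is reported once, starting from its alphabetically smallest account.
    """
    graph = defaultdict(set)
    for sender, receiver, _amount in transfers:
        graph[sender].add(receiver)

    cycles = set()

    def walk(start, node, path):
        for nxt in graph.get(node, ()):
            if nxt == start:
                cycles.add(tuple(path))  # path closes back on the start account
            elif nxt not in path and nxt > start and len(path) < max_length:
                walk(start, nxt, path + [nxt])

    for start in sorted(graph):
        walk(start, start, [start])
    return sorted(cycles)


# Hypothetical transfers: (sender, receiver, amount).
transfers = [
    ("A", "B", 9000),
    ("B", "C", 8900),
    ("C", "A", 8800),  # closes the loop A -> B -> C -> A
    ("C", "D", 100),
    ("D", "E", 50),
]

cycles = find_cycles(transfers)
print(cycles)
```

A graph model goes further by learning which shapes, amounts, and timings tend to accompany confirmed cases, but an explicit traversal like this is a useful, explainable baseline.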

Compliance Benefit: This significantly reduces “False Positives,” the bane of AML teams, while catching sophisticated “low-and-slow” fraud patterns that would never trigger a standard rule-based alarm.

Roadmap for the Implementation of AI

For GRC teams ready to start, the implementation should be handled in phases to ensure data integrity and team buy-in.

Phase 1: The Data Foundation

AI is only as good as the data it has consumed.

Audit your data: Where is your risk data stored? Is it secure?

Centralization: Move from disconnected spreadsheets to a centralized GRC platform that supports API integrations.

Standardization: Ensure that “Risk Level High” means the same thing in the IT department as it does in the Finance department. Establish a quantifiable metric for risk levels.
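One common way to make a risk level quantifiable is a likelihood-times-impact score shared by every department. The sketch below assumes 1-to-5 scales and band cut-offs of 6 and 15; those numbers and the `risk_level` function are illustrative assumptions, and each organization would set its own.

```python
def risk_level(likelihood, impact):
    """Map 1-5 likelihood and impact ratings to a shared Low/Medium/High label."""
    if not (1 <= likelihood <= 5 and 1 <= impact <= 5):
        raise ValueError("likelihood and impact must be between 1 and 5")
    score = likelihood * impact
    if score >= 15:
        return "High"
    if score >= 6:
        return "Medium"
    return "Low"


# The same function is used by IT and Finance, so "High" means the same thing.
print(risk_level(5, 4))  # likely and severe
print(risk_level(2, 3))  # moderate on both scales
print(risk_level(1, 5))  # severe but very unlikely
```

Because the rule lives in one place, changing a cut-off changes it for every department at once.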

Phase 2: The Pilot Program

Don’t try to automate everything at once. Pick a low-impact, low-complexity task.

Recommended Pilot: Third-Party Risk or Regulatory Tracking.

Goal: Demonstrate that the AI can catch a risk that a human missed, or save a specific number of work hours.

Phase 3: Integration and “Human-in-the-Loop”

AI should augment humans, not replace them.

Define the Workflow: The AI identifies a potential risk, but a human GRC professional must “adjudicate” it.

Feedback Loops: When a human corrects the AI (e.g., “This isn’t actually a fraud risk”), the system learns from that correction to improve future accuracy.

Phase 4: Scaling and Governance

Once the pilot is successful, scale to other risk domains (Cyber, ESG, Finance).

Establish an AI Ethics Committee: Monitor your own AI for bias. Ensure the models are “Explainable” (XAI) so you can tell a regulator exactly why the AI made a certain decision.

Managing the Challenges of AI in GRC

Implementing AI for risk management is not without its pitfalls. GRC teams must be prepared for three specific challenges:

The “Black Box” Problem

In highly regulated industries like banking or healthcare, “the AI told me so” is not an acceptable answer for a regulator.

The Solution: Prioritize “Explainable AI.” Choose vendors that provide a clear trail of logic for every risk flag.

Algorithmic Bias

If your training data contains historical biases (e.g., in hiring or lending), the AI will replicate and accelerate those biases.

The Solution: Regular “Bias Audits” of your AI models. GRC teams should treat their own AI systems as “high-risk vendors” that require constant oversight.

Data Privacy and Security

Sending sensitive corporate data to a public AI model (like the free version of ChatGPT) is a massive risk.

The Solution: Use private, “Single-Tenant” AI instances. Ensure that your data is not being used to train the provider’s general model.

The Strategic ROI of AI-Enabled Risk Management

The return on investment for AI in GRC is measured in three ways:

Cost Avoidance

Avoiding a single multi-million dollar GDPR fine or a ransomware payout can pay for the AI implementation many times over.

Operational Velocity

When compliance is automated, the business can move faster. New products can be launched in new countries without waiting six months for a manual compliance review.

Strategic Focus

GRC professionals stop being “data hunters” and start being “risk strategists.” They spend their time advising the CEO on high-level strategy rather than chasing down audit logs.

Conclusion

For GRC teams, the implementation of AI is a journey, not a destination. As the technology evolves, so will the risks it manages. The organizations that thrive in the coming decade will be those that treat AI as a core member of their risk management team.

By automating the mundane, predicting the unforeseen, and providing 100% visibility into compliance, AI will transform GRC from a “necessary evil” into a powerful engine for organizational trust and resilience.

Automation Series: Theory of Implementing AI in Risk Management was originally published in Coinmonks on Medium.