Cross-Validation — The Missing Piece in My Machine Learning Workflow

(From Confused Developer to Building Real ML Systems — Part 12)

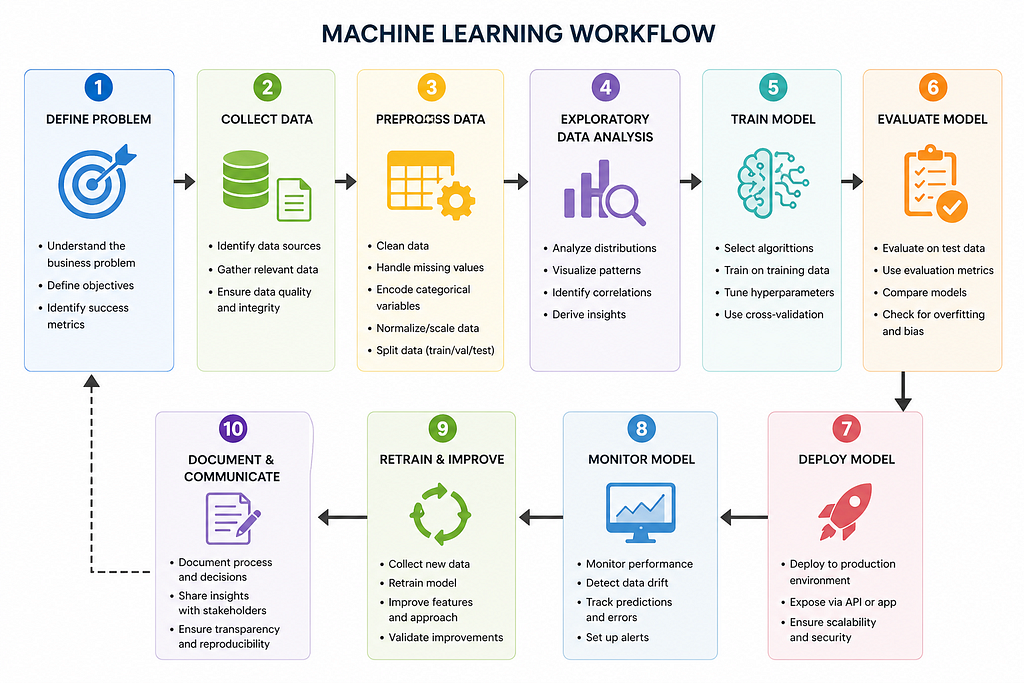

ML workflow

I thought I finally got it right.

Clean data ✅

Tuned model ✅

Great accuracy ✅

Everything looked perfect.

Until…

I ran the model again.

And got a completely different result.

😕 The Problem I Couldn’t Explain

Same dataset.

Same code.

But:

Accuracy changed

Predictions shifted

Results felt unreliable

That’s when I realized:

My model wasn’t stable.

🧠 The Question That Changed Everything

I asked:

“What if my train-test split is misleading me?”

And that’s when I discovered:

Cross-Validation…

🔍 What Is Cross-Validation (Simple Idea)

Instead of splitting data once:

👉 Split it multiple times

👉 Train & test multiple times

👉 Average the results

💡 Real-Life Analogy

Imagine judging a student:

One exam → not reliable ❌

Multiple exams → better judgment ✅

👉 That’s cross-validation

⚡ Interactive Moment (Think First)

👉 Which is more reliable?

One train-test split

5 different splits, averaged

👇 You already know the answer 😉

💻 The Problem with One Split

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2)

model.fit(X_train, y_train)

print(model.score(X_test, y_test))

👉 Result depends on how data is split
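
To see that dependence directly, here is a minimal sketch that repeats the same split with five different seeds. The Iris dataset, the LogisticRegression model, and the variable names are my assumptions for illustration; the original snippet does not say which data or model was used.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split

# Assumed example data and model (not from the original post)
X, y = load_iris(return_X_y=True)

split_scores = []
for seed in range(5):
    # Same data, same code: only the random split changes
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, test_size=0.2, random_state=seed
    )
    model = LogisticRegression(max_iter=1000)
    model.fit(X_train, y_train)
    split_scores.append(model.score(X_test, y_test))

print("Score per split:", [round(s, 3) for s in split_scores])
print("Spread (max - min):", round(max(split_scores) - min(split_scores), 3))
```

Leaving out random_state, as in the snippet above, gives a fresh shuffle on every run, which is one likely reason the same code can report a different accuracy each time.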

💻 The Fix: Cross-Validation

from sklearn.model_selection import cross_val_score

scores = cross_val_score(model, X, y, cv=5)

print("Scores:", scores)

print("Average Score:", scores.mean())

👉 A more stable estimate

👉 A more reliable picture of performance

😲 What Changed for Me

Before:

Results were inconsistent

Hard to trust model

After:

Stable performance

Clear understanding of model quality

🔥 What Is K-Fold (Simple Breakdown)

If cv = 5:

Data is split into 5 parts

The model trains 5 times, each time on 4 of the parts

Each part serves as the test data exactly once

👉 Final score = average of all
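
The splitting itself can be sketched in plain Python. The helper k_fold_indices below is hypothetical and written only to show the mechanics; it is not scikit-learn's implementation, which can also shuffle and, for classifiers, stratifies the folds by class.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_idx, test_idx) pairs for k contiguous folds.

    Hypothetical helper for illustration only.
    """
    indices = list(range(n_samples))
    # Spread any remainder over the first folds so sizes differ by at most 1
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test_idx = indices[start:start + size]
        train_idx = indices[:start] + indices[start + size:]
        yield train_idx, test_idx
        start += size


folds = list(k_fold_indices(n_samples=10, k=5))
for i, (train_idx, test_idx) in enumerate(folds, start=1):
    print(f"Fold {i}: test={test_idx} train={train_idx}")
```

With 10 samples and 5 folds, every sample lands in the test set exactly once and in the training set the other four times.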

🧠 The Breakthrough Moment

When I saw:

Individual scores

Average score

I finally understood:

“My model is not as good as I thought… but now I know the truth.”

🎯 The Framework I Follow Now

✔ Step 1: Train model

✔ Step 2: Use cross_val_score

✔ Step 3: Check mean score

✔ Step 4: Check variation
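
Put together, the four steps might look like the sketch below. The dataset and model are again assumptions chosen only to make the example runnable, and the standard deviation across folds is one reasonable way to "check variation", not the only one.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score

# Assumed example data and model (not from the original post)
X, y = load_iris(return_X_y=True)
model = LogisticRegression(max_iter=1000)

# cross_val_score fits a fresh copy of the model on each of the 5 folds
scores = cross_val_score(model, X, y, cv=5)

mean_score = scores.mean()  # Step 3: the headline number
score_std = scores.std()    # Step 4: how much the folds disagree

print("Scores per fold:", scores.round(3))
print(f"Mean accuracy: {mean_score:.3f}")
print(f"Std across folds: {score_std:.3f}")
```

Reporting the mean together with the spread makes it harder to be fooled by one lucky fold.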

⚠️ Common Mistakes to Avoid

❌ Trusting a single test score

❌ Ignoring variance in results

❌ Using CV incorrectly on time-series data
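
On the time-series point: ordinary K-fold can train on rows that come after the test rows, which leaks the future into the past. One option in scikit-learn is TimeSeriesSplit, which always tests on rows later than the ones it trained on. The sketch below uses synthetic data and a Ridge regressor, both of which are my assumptions for illustration.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.model_selection import TimeSeriesSplit, cross_val_score

# Synthetic, time-ordered data (assumed for illustration): a noisy upward trend
rng = np.random.default_rng(0)
n = 100
X = np.arange(n, dtype=float).reshape(-1, 1)
y = 0.5 * X.ravel() + rng.normal(scale=1.0, size=n)

tscv = TimeSeriesSplit(n_splits=5)
for train_idx, test_idx in tscv.split(X):
    # Every training row comes before every test row
    print(f"train 0..{train_idx[-1]} -> test {test_idx[0]}..{test_idx[-1]}")

# The same splitter can be passed to cross_val_score through cv
scores = cross_val_score(Ridge(), X, y, cv=tscv)
print("R^2 per split:", scores.round(3))
```

Because Ridge is a regressor, the default score here is R² rather than accuracy.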

💡 Pro Insight

High average + low variation → good model ✅

High variation → unstable model ⚠️

🔥 Your Turn (Interactive Challenge)

Try this:

Train your model normally

Apply cross-validation

Compare results

Ask yourself:

“Was my original score misleading?”

🚀 Why This Matters (Real World)

In real systems:

Data changes

Patterns shift

Models degrade

👉 Cross-validation won’t stop that, but it gives you an honest baseline to measure it against

🔗 What’s Next

Now your evaluation is reliable…

It’s time to take the next leap:

Feature Engineering — The Real Power Behind Great Models

👇 Continue the Series

If you’re serious about mastering ML:

👉 Follow this journey

👉 Learn what actually works

Next Part: I Didn’t Change My Model… I Changed My Features 🚀

💬 Final Thought

Stop trusting one result.

Start asking:

“Is my model consistently good?”

My Model Worked… Until It Didn’t — This One Trick Fixed It was originally published in Coinmonks on Medium, where people are continuing the conversation by highlighting and responding to this story.