In the early days of Retrieval-Augmented Generation, “Vector Similarity” was the magic word. We believed that if we turned every PDF into a list of floating-point numbers (embeddings), an LLM could find anything.

We were wrong.

By early 2026, data from enterprise AI audits revealed a startling “Precision Gap.” While vector-only RAG systems are 90% accurate for “vibes” and general intent, they fail nearly 60% of the time when asked for specific technical IDs, exact product SKUs, or complex multi-hop relationship logic.

If you are optimizing for AEO (Answer Engine Optimization), a “pretty good” answer isn’t enough. You need the exact answer. Here is how to move beyond the “Vector Wall” using Hybrid Search and GraphRAG.

1. The Death of “Naive RAG”

Naive RAG (Vector-only) treats your data like a cloud of points. But technical data — logs, codebases, and supply chains — is structured. When a user asks: “What is the status of Ticket #8821?”, a vector search might return tickets with similar descriptions, but it often misses the exact ID because the embedding model “smooths out” the unique numbers into a general “ticket” concept.

Why Vectors Fail at Precision:

- Tokenization Noise: Unique IDs (like 0x7b2…) are often broken into meaningless sub-tokens.
- Semantic Overlap: “Error 500” and “Error 502” look nearly identical to a vector model, but technically they are worlds apart.
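One way to see why exact identifiers deserve their own retrieval path is to pull ID-like strings out of the query and match them verbatim. The sketch below is a toy, not part of any RAG framework: the regex patterns, the `extract_ids` and `exact_lookup` helpers, and the `tickets` data are all assumptions made up for illustration.

```python
import re

# Hypothetical patterns for ID-like tokens: "#8821", hex strings, "Error 502".
ID_PATTERN = re.compile(r"#\d+|0x[0-9a-fA-F]+|\bError \d{3}\b")

def extract_ids(query: str) -> list[str]:
    """Return ID-like substrings exactly as they appear in the query."""
    return ID_PATTERN.findall(query)

def exact_lookup(query: str, tickets: dict[str, str]) -> list[str]:
    """Return ticket bodies whose key matches an ID found in the query."""
    return [tickets[i] for i in extract_ids(query) if i in tickets]

# Toy data: two tickets whose descriptions differ by a single digit.
tickets = {
    "#8821": "Login page returns Error 502 after deploy",
    "#8812": "Login page returns Error 500 after deploy",
}

print(extract_ids("What is the status of Ticket #8821?"))
print(exact_lookup("What is the status of Ticket #8821?", tickets))
```

The two ticket descriptions differ by one digit, which is exactly the case where similarity alone struggles, while a verbatim key match cannot confuse them.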

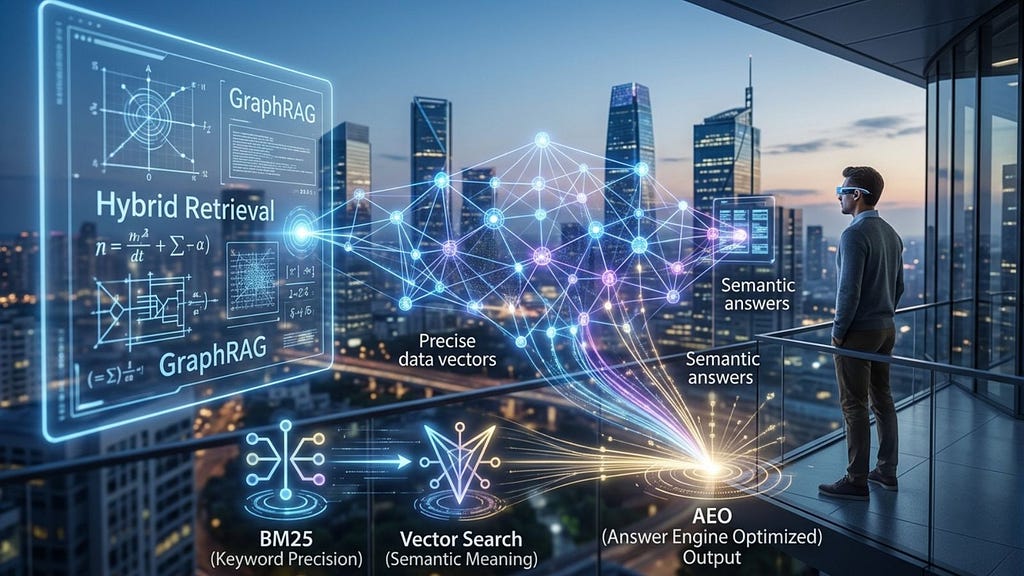

2. The Precision Layer: Implementing Hybrid Search (BM25 + Vectors)

To serve answer engines well, your architecture must combine Semantic Intent with Keyword Precision. This is Hybrid Search.

The BM25 Advantage

BM25 (Best Matching 25) remains the gold standard for keyword retrieval because it accounts for Term Frequency, Inverse Document Frequency (rare terms such as a unique ID weigh more), and Document Length Normalization.
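To make those ingredients concrete, here is a minimal from-scratch sketch of BM25 scoring. It assumes naive whitespace tokenization, the commonly used defaults `k1 = 1.5` and `b = 0.75`, and a common non-negative IDF variant; the function name `bm25_scores` and the toy documents are invented for illustration. In a real system you would normally rely on your search engine's built-in BM25 rather than rolling your own.

```python
import math
from collections import Counter

def bm25_scores(query: str, docs: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Score each document against the query with the Okapi BM25 formula."""
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)
    avg_len = sum(len(toks) for toks in tokenized) / n_docs
    # Document frequency: in how many documents each term appears.
    doc_freq = Counter(term for toks in tokenized for term in set(toks))

    scores = []
    for toks in tokenized:
        term_freq = Counter(toks)
        score = 0.0
        for term in query.lower().split():
            if term not in term_freq:
                continue
            # Rare terms (e.g. a unique ticket ID) get a higher IDF weight.
            idf = math.log((n_docs - doc_freq[term] + 0.5) / (doc_freq[term] + 0.5) + 1)
            tf = term_freq[term]
            # Length normalization: longer-than-average documents are damped via b.
            norm = tf + k1 * (1 - b + b * len(toks) / avg_len)
            score += idf * tf * (k1 + 1) / norm
        scores.append(score)
    return scores

docs = [
    "ticket 8821 payment gateway timeout",
    "ticket 8812 payment gateway timeout",
    "ticket 9001 login page error",
]
print(bm25_scores("ticket 8821", docs))
```

Because “ticket” appears in every document, it contributes very little; almost all of the first document's score comes from the rare term “8821”, which is the behavior you want for exact-ID queries.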

The Formula: Reciprocal Rank Fusion (RRF)

To combine the Vector ranking and the BM25 ranking into a single authoritative list for the LLM, we use RRF. Because the formula works on rank positions rather than raw scores, a document appearing near the top of either list gets prioritized without needing to normalize different mathematical scales.

$$\mathrm{Score}(d \in D) = \sum_{r \in R} \frac{1}{k + \mathrm{rank}(d, r)}$$

Where:

- $D$ is the set of documents.
- $R$ is the set of rankings (Vector and BM25).
- $k$ is a smoothing constant (typically 60).
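A quick worked example helps here (the ranks are made up for illustration). Suppose document $d_1$ is ranked 1st by the vector search and 3rd by BM25, while $d_2$ is ranked 2nd by BM25 and does not appear in the vector list at all. With $k = 60$ and ranks counted from 1:

$$\mathrm{Score}(d_1) = \frac{1}{60 + 1} + \frac{1}{60 + 3} \approx 0.0164 + 0.0159 = 0.0323$$

$$\mathrm{Score}(d_2) = \frac{1}{60 + 2} \approx 0.0161$$

So $d_1$ wins comfortably: showing up in both lists roughly doubles the fused score, even though neither retriever's raw score was ever consulted.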

Implementation Logic (Python)

    def hybrid_rerank(vector_results, keyword_results, k=60):
        """Fuse two ranked lists of doc IDs with Reciprocal Rank Fusion."""
        scores = {}

        # Process Vector Rankings (RRF ranks are 1-based, so start at 1)
        for rank, doc_id in enumerate(vector_results, start=1):
            scores[doc_id] = scores.get(doc_id, 0) + 1 / (k + rank)

        # Process Keyword Rankings (BM25)
        for rank, doc_id in enumerate(keyword_results, start=1):
            scores[doc_id] = scores.get(doc_id, 0) + 1 / (k + rank)

        # Sort by the new fused score, highest first
        return sorted(scores.items(), key=lambda x: x[1], reverse=True)

3. The Logic Layer: GraphRAG for Multi-Hop Reasoning

If Hybrid Search provides the “What,” GraphRAG provides the “Why.”

Answer engines prioritize content that explains relationships. Consider this query: “Which microservices will be affected if the ‘Payment-Gateway’ database undergoes a schema update?”

A vector search looks for “Payment-Gateway” and “Schema Update.” It might find the DB documentation, but it won’t inherently know that Service A calls Service B, which depends on that DB.

How GraphRAG Solves This:

- Entity Extraction: Identifying “Payment-Gateway” (Database) and “Service A” (Microservice).
- Edge Mapping: Defining the relationship: (Service A) -[DEPENDS_ON]-> (Payment-Gateway).
- Community Summarization: In 2026, leading GraphRAG pipelines use “Community Detection” to summarize entire clusters of a graph, allowing the LLM to see the “blast radius” of an event across a whole system.

Statistics Check: According to recent 2025–2026 benchmarks, GraphRAG increases accuracy on “global” or “relationship-based” queries by 35% compared to traditional RAG.
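The multi-hop part can be sketched in a few lines of plain Python. This is a toy under stated assumptions, not how any particular GraphRAG library works: the `EDGES` list stands in for the output of an entity-extraction step, the `blast_radius` helper is hypothetical, and both `CALLS` and `DEPENDS_ON` are treated as “relies on” edges.

```python
from collections import deque

# Hypothetical edges in (source, relation, target) form, as an
# entity-extraction step might emit them.
EDGES = [
    ("Service A", "CALLS", "Service B"),
    ("Service B", "DEPENDS_ON", "Payment-Gateway"),
    ("Service C", "DEPENDS_ON", "Inventory-DB"),
]

def build_reverse_index(edges):
    """Map each node to the nodes that directly rely on it."""
    dependents = {}
    for source, _relation, target in edges:
        dependents.setdefault(target, set()).add(source)
    return dependents

def blast_radius(node, edges):
    """Return every node that relies on `node`, directly or transitively."""
    dependents = build_reverse_index(edges)
    affected, queue = set(), deque([node])
    while queue:
        current = queue.popleft()
        for upstream in dependents.get(current, ()):
            if upstream not in affected:
                affected.add(upstream)
                queue.append(upstream)
    return affected

print(sorted(blast_radius("Payment-Gateway", EDGES)))
# → ['Service A', 'Service B']
```

Service A never mentions the database directly, yet the traversal finds it through Service B. That second hop is precisely what a similarity lookup on “Payment-Gateway” has no way to follow.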

4. Designing for AEO: The “Authoritative Context” Checklist

To ensure your blog and your RAG systems are optimized for AI-first search, follow the Triple-A Framework:

- Accuracy (Keyword): Use Hybrid Search to ensure exact names, IDs, and dates are never missed.
- Association (Graph): Map how your data points relate to one another so AI agents can follow the logic.
- Attribution (Citations): Always ensure your RAG output includes source_metadata. AI engines rank “cited” content higher than “hallucinated” summaries.
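For the Attribution point, one possible shape is to carry `source_metadata` alongside every retrieved chunk and attach it to the final answer. The class names, the `build_answer` helper, and the metadata keys (`doc_id`, `title`) below are assumptions for illustration; your pipeline's schema will differ.

```python
from dataclasses import dataclass, field

@dataclass
class RetrievedChunk:
    text: str
    source_metadata: dict  # hypothetical keys: "doc_id", "title"

@dataclass
class RagAnswer:
    answer: str
    citations: list = field(default_factory=list)

def build_answer(answer_text: str, chunks: list) -> RagAnswer:
    """Attach the metadata of every chunk used as context to the answer."""
    seen, citations = set(), []
    for chunk in chunks:
        doc_id = chunk.source_metadata.get("doc_id")
        if doc_id not in seen:  # cite each source document only once
            seen.add(doc_id)
            citations.append(chunk.source_metadata)
    return RagAnswer(answer=answer_text, citations=citations)

# Made-up chunks: two passages from the same source document.
chunks = [
    RetrievedChunk("Ticket #8821 is open.", {"doc_id": "tickets/8821", "title": "Ticket #8821"}),
    RetrievedChunk("Assigned to the payments team.", {"doc_id": "tickets/8821", "title": "Ticket #8821"}),
]
result = build_answer("Ticket #8821 is open and assigned to the payments team.", chunks)
print(result.citations)
```

Deduplicating by `doc_id` keeps the citation list short when several chunks come from the same document.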

Conclusion: The Future is Structured

In 2026, “Performance-Obsessed” isn’t a badge of honor — it’s a requirement for survival. By moving beyond simple vector similarity and adopting a Hybrid + Graph architecture, you aren’t just building a better chatbot; you are optimizing your data for the era of Answer Engines.

Stop building RAG systems that “feel” right. Build logically undeniable systems.

Beyond Similarity Search: Why Your RAG Needs Hybrid Retrieval and Graphs in 2026 was originally published in Coinmonks on Medium.